|

Registering a mission video sequence to a reference image without any metadata (camera location, viewing angles, or reference DEMs) remains a challenging problem. This paper presents a layer-based approach for registering a video sequence to a reference image of a 3D scene containing multiple layers. First, robust layers are extracted from the mission video sequence and a mosaic is generated for each layer; in the process, the relative transformation parameters between consecutive frames are estimated. We then formulate image registration as a region partitioning problem: the overlapping regions between two images are partitioned into supporting and non-supporting (outlier) regions, and the motion parameters of the supporting regions are determined at the same time. Within this framework, we first estimate a set of sparse, robust correspondences between the first frame and the reference image. Starting from the corresponding seed patches, the aligned areas are expanded to cover the complete overlapping area of each layer using a graph cut algorithm with a level set representation, which registers the first frame to the reference image. Next, using the transformation parameters estimated from the mosaic, we initially align the remaining frames of the video to the reference image. Finally, using the same partitioning framework, the registration is refined by adjusting the aligned areas and removing outliers. Several examples in the experiments show that the approach is effective and robust.

Related Publications:

Jiangjian Xiao and Mubarak Shah, “Layer-Based Video Registration”, Machine Vision and Applications, Vol. 16, No. 2, pp. 75-84, 2005.

Jiangjian Xiao, Yunjun Zhang and Mubarak Shah, “Adaptive Region-Based Video Registration”, IEEE Workshop on Motion, Jan 5-6, Breckenridge, Colorado, 2005.
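The initial alignment of the remaining frames, as described in the abstract, can be read as chaining transformations: once the first frame is registered to the reference image, the frame-to-frame transformations from the mosaic carry that registration forward. The sketch below illustrates this under the assumption that every transformation is a 3x3 homography; the abstract does not state the motion model, and the names `chain_to_reference`, `h_ref_from_first`, and `h_prev_from_next` are hypothetical, not taken from the publications.

```python
def matmul3(a, b):
    """Multiply two 3x3 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def normalize(h):
    """Scale a homography so that its bottom-right entry is 1."""
    s = h[2][2]
    return [[v / s for v in row] for row in h]


def chain_to_reference(h_ref_from_first, h_prev_from_next):
    """Compose frame-to-frame transforms into frame-to-reference transforms.

    h_ref_from_first maps points of the first frame into the reference image
    (assumed here to be a 3x3 homography).
    h_prev_from_next[k] maps points of frame k+1 into frame k (0-indexed).
    Returns one frame-to-reference matrix per frame, first frame included.
    """
    chained = [h_ref_from_first]
    for h in h_prev_from_next:
        # reference <- frame k, followed by frame k <- frame k+1
        chained.append(normalize(matmul3(chained[-1], h)))
    return chained


def apply_h(h, x, y):
    """Map the point (x, y) through the homography h."""
    u = h[0][0] * x + h[0][1] * y + h[0][2]
    v = h[1][0] * x + h[1][1] * y + h[1][2]
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    return (u / w, v / w)
```

The chained matrices only provide a starting point; accumulated drift is what the later refinement step in the abstract is meant to correct.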

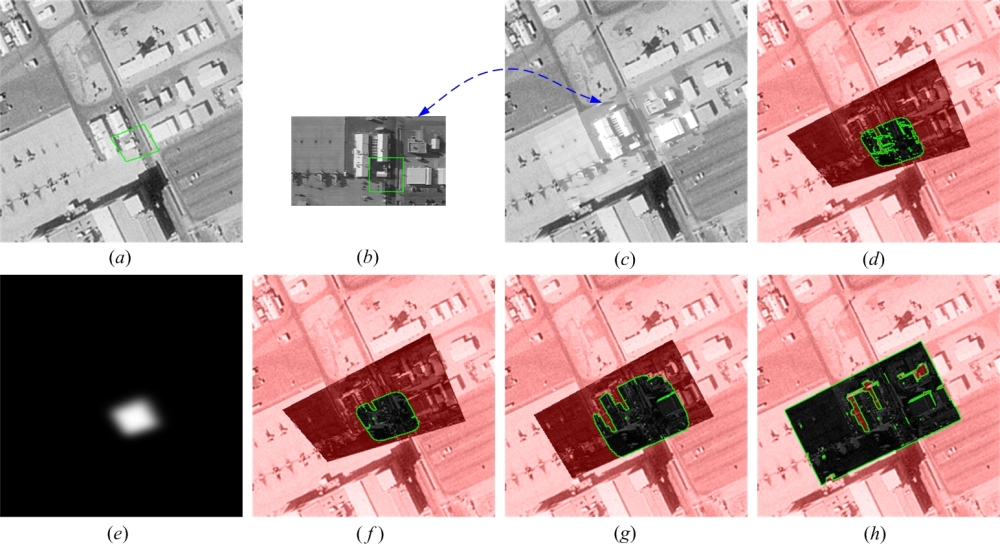

Figure 1. Region expansion for initial alignment. (a-b) Initial corresponding patch contours in the reference and mission images, respectively. (c) The final registration result, where the intensities of the embedded mission image are adjusted by illumination coefficients. (d) Simple expansion and partitioning started from the initial contour shown in (a). (e) The level set representation of the initial contour in (a). (f-h) Intermediate results using the graph cut method with the level set representation, which guarantees that the expansion evolves gradually from the center to the boundary. Note: the green boxes in (a) and (b) are the initial seed regions. (f-h) are difference images between the warped (b) and (a); the green contours are the supporting region boundaries obtained with the bi-partitioning algorithm, and the non-supporting pixels are masked in red.
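One common way to build a level set representation of a seed contour like the one in panel (e) is a signed distance function: negative inside the contour, zero on it, and growing with distance outside. The sketch below computes it for a rectangular seed box and shows how thresholding it yields nested regions that grow outward from the seed. It is only an illustration under that assumption; it does not implement the graph cut partitioning, and the names `box_signed_distance` and `band` are hypothetical.

```python
import math


def box_signed_distance(width, height, x0, y0, x1, y1):
    """Signed distance to the axis-aligned box [x0, x1] x [y0, y1].

    Returns phi as a list of rows, with phi[y][x] < 0 inside the box,
    0 on its boundary, and > 0 outside.
    """
    cx, cy = (x0 + x1) / 2, (y0 + y1) / 2   # box center
    hx, hy = (x1 - x0) / 2, (y1 - y0) / 2   # box half-extents
    phi = []
    for y in range(height):
        row = []
        for x in range(width):
            dx = abs(x - cx) - hx
            dy = abs(y - cy) - hy
            outside = math.hypot(max(dx, 0), max(dy, 0))
            inside = min(max(dx, dy), 0)
            row.append(outside + inside)
        phi.append(row)
    return phi


def band(phi, t):
    """Mask of the pixels within distance t of the seed region."""
    return [[v <= t for v in row] for row in phi]
```

Raising `t` step by step produces masks in which each one contains the previous, which mirrors the center-to-boundary growth the caption describes; in the actual method the region accepted at each step is decided by the partitioning, not by a fixed threshold.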

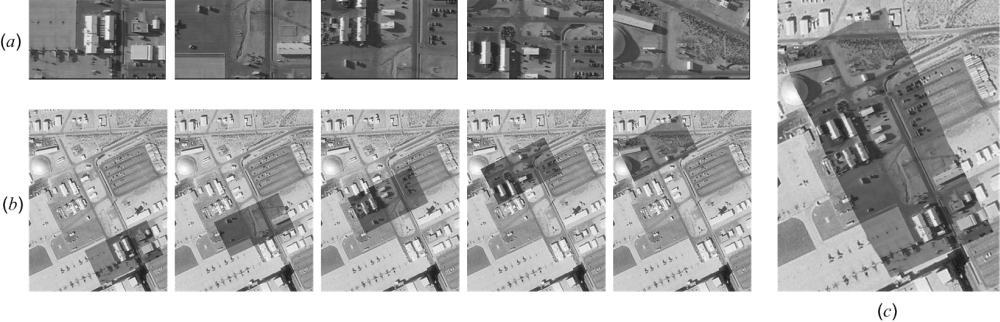

Figure 2. Video registration results. (a) Mission video frames. (b) Registration results for several frames, where the mission images are superimposed on the reference image. (c) Full registration of all the mission video frames. |

|