Accepted Papers

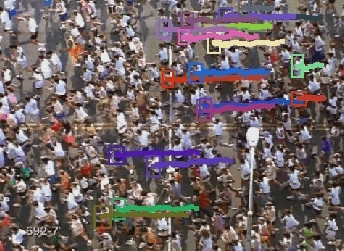

- MCMC-Based Tracking and Identification of Leaders in Groups, Avishy Y. Carmi, Lyudmila Mihaylova, Francois Septier, Sze Kim Pang, Pini Gurfil and Simon J. Godsill.

- Everybody needs somebody: Modeling social and grouping behavior on a linear programming multiple people tracker, Laura Leal-Taixe, Gerard Pons-Moll and Bodo Rosenhahn.

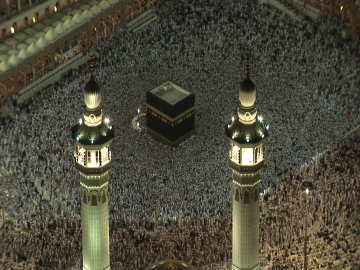

- Virtual Tawaf: A Case Study in Simulating the Behavior of Dense, Heterogeneous Crowds, Sean Curtis, Stephen J. Guy, Basim Zafar, and Dinesh Manocha.

- Optimizing Interaction Force for Global Anomaly Detection in Crowded Scenes, R. Raghavendra, Alessio Del Bue, Marco Cristani, Vittorio Murino.

- Analyzing Pedestrian Behavior in Crowds for Automatic Detection of Congestions, Barbara Krausz, Christian Bauckhage.

- Integrating pedestrian simulation, tracking and event detection for crowd analysis, Matthias Butenuth, Florian Burkert, Angelika Kneidl, André Borrmann, Florian Schmidt, Stefan Hinz, Beril Sirmacek.

- T-Junction: Experiments, Trajectory Collection, and Analysis, Maik Boltes, Jun Zhang, Armin Seyfried, Bernhard Steffen.

- Calibrating Dynamic Pedestrian Route Choice with an Extended Range Telepresence System, Tobias Kretz, Stefan Hengst, Vidal Roca, Antonia Perez Arias, Simon Friedberger, and Uwe D. Hanebeck.

- Lane Formation in a Microscopic Model and the corresponding Partial Differential Equation, Jan-Frederik Pietschmann, Barbel Schlake.

Call for Papers

Papers describing novel and original research are solicited in areas related to visual analysis of crowded scenes. Topics of interest include, but are not limited to:

- Single and Multi-camera Tracking in High Density Crowds

- Event Analysis in Crowded and Cluttered Scenes

- Group Activity Analysis

- Action Recognition in Crowds

- Applications of Visual Crowd Analysis Systems

- Crowd Flow Analysis

- Data Driven Crowd Simulation & Behavior Understanding

- Crowd Interaction Models and their Applications to Object Detection, Tracking, and Event Analysis

- Force-based Models for Pedestrian Dynamics in Crowds

- Image and Video Features for Crowd Modeling

- Datasets/Model Validation/Calibration/Algorithm Testing/Annotation Techniques for Crowd Research

Workshop Goals

Problems related to the analysis of crowded scenes arise in a variety of contexts. A surveillance system installed in a city center may be interested in detecting individual objects that traverse the crowded scene to bootstrap its tracking module. At another location, a similar system may be interested in counting the number of people or estimating the density of the crowd. Furthermore, in the context of object tracking, following an individual person, a group of people, or the entire crowd may be of interest. Similarly, event recognition systems may be interested in understanding what is happening in a scene by collecting local as well as global crowd statistics. Developing mathematical models of crowd movement and people interaction for simulation and modeling purposes is yet another area of interest.

It is generally agreed that in low density environments the problems described above are well understood and relatively mature solutions exist to solve them. However, computer vision research for moderate or high density environments is still in its early stages. Although attempts have been made in the published literature to extend conventional computer vision algorithms designed for low density scenes to address some of the challenges of crowded scenes, these techniques alone appear insufficient to solve the new set of challenges posed by moderate to high density crowds.

In recent years, an encouraging new development has been the emergence of crowd motion and interaction models, originally developed in sociology and adopted by computer graphics scientists for simulating realistic crowd behaviors. These models, the social force model being one of them, depict crowd motion and interaction and can be used for simulating different emergent behaviors among a large number of agents or humans. Such crowd simulation systems are used for architectural and urban planning, enhancing virtual or training environments, animating characters for movies and games, as well as online virtual worlds (e.g., Second Life). In addition, a group of researchers and practitioners in architecture, civil and fire safety engineering, physics, and mathematics have been working on pedestrian and evacuation dynamics, which addresses whether crowd behavior in an emergency situation is predictable and which patterns occur in pedestrian flows based on common rules. Their main goal is the modeling and simulation of pedestrian and crowd movement as well as the dynamical aspects of evacuation processes.

We believe computer vision research on visual analysis of crowds can greatly benefit from bringing together researchers from the areas of computer vision, computer graphics, physics, and evacuation dynamics. Such a gathering will lay a foundation for an integrated analysis-synthesis approach to crowd modeling, where complementary viewpoints and techniques from these areas are used to develop additional insight into crowd analysis, modeling, and simulation problems. The focus will be on the exchange of ideas on how to develop visual crowd analysis capabilities that make use of crowd simulation and evacuation dynamics techniques. As a byproduct, the computer graphics and evacuation dynamics communities will also benefit, as this workshop will lead to improved methods for data-driven modeling, simulation, and analysis of large-scale “heterogeneous crowds” using video recordings of real-world crowds.

We hope to address the following scientific questions and challenges through the workshop:

- What are the general principles that characterize complex crowd behavior of heterogeneous individuals?

- How can verifiable mathematical models of crowd motion and interaction be developed based on these principles?

- How can these general principles be used to enhance the performance of low-level vision tasks such as object detection, tracking, and activity analysis in crowds?

- What are the possible problem areas that will benefit from simulation models for enhanced video analysis capabilities (e.g., tracking, target acquisition across sensor gaps, and sensor hand-off techniques)?

- At what granularity level (micro, macro) should such an analysis-synthesis approach be applied?